Quantum information is the information of the state of a quantum system. It is the basic entity of study in quantum information theory and can be manipulated using quantum information processing techniques. The term refers both to the technical definition in terms of von Neumann entropy and to the general computational term. Quantum information science is an interdisciplinary field that involves quantum mechanics, computer science, information theory, philosophy and cryptography, among other fields. Its study is also relevant to disciplines such as cognitive science, psychology and neuroscience. Its main focus is extracting information from matter at the microscopic scale.

Observation is one of the most important ways of acquiring information in science, and measurement is required to quantify an observation, which makes measurement crucial to the scientific method. In quantum mechanics, due to the uncertainty principle, non-commuting observables cannot be precisely measured simultaneously, because an eigenstate in one basis is not an eigenstate in the other basis. According to the eigenstate–eigenvalue link, an observable is well-defined (definite) when the state of the system is an eigenstate of that observable. Since two non-commuting observables are not simultaneously well-defined, a quantum state can never contain definitive information about both of them.

Information is something physical that is encoded in the state of a quantum system. While quantum mechanics examines the properties of matter at the microscopic level, quantum information science focuses on extracting information from those properties, and quantum computation manipulates and processes that information (that is, performs logical operations on it) using quantum information processing techniques.

Quantum information, like classical information, can be processed using digital computers, transmitted from one location to another, manipulated with algorithms, and analyzed with computer science and mathematics. Just as the basic unit of classical information is the bit, the basic unit of quantum information is the qubit. Quantum information can be measured using von Neumann entropy. Recently, quantum computing has become an active research area because of its potential to disrupt modern computation, communication, and cryptography.

The history of quantum information theory began at the turn of the 20th century, when classical physics was revolutionized into quantum physics.

Optical lattices use lasers to separate rubidium atoms (red) for use as information bits in neutral-atom quantum processors, prototype devices which designers are trying to develop into full-fledged quantum computers.

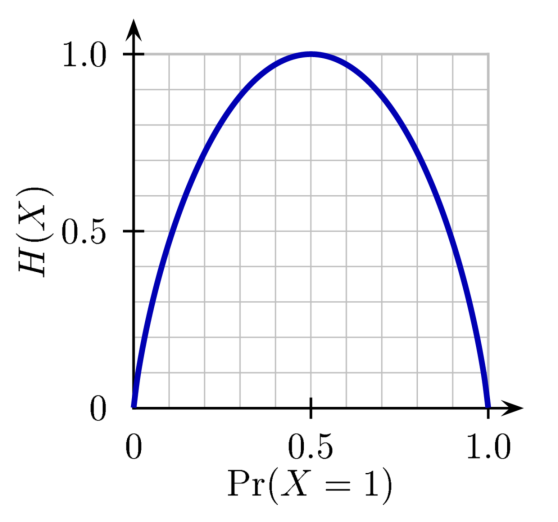
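The statements about non-commuting observables and von Neumann entropy can be checked numerically for a single qubit. The following is a minimal sketch rather than anything taken from the text: it assumes the Pauli matrices X and Z as the two observables, and it relies on the standard result that a qubit density matrix whose Bloch vector has length r has eigenvalues (1 + r)/2 and (1 - r)/2. All function names are illustrative.

```python
import math

# Assumption: the Pauli X and Z matrices stand in for two non-commuting observables.
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]


def matmul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def commutator(a, b):
    """Return [a, b] = ab - ba; a non-zero result means a and b do not commute."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


def is_eigenvector(m, v, tol=1e-12):
    """A non-zero v is an eigenvector of m when m*v is parallel to v."""
    w = [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]
    return abs(w[0] * v[1] - w[1] * v[0]) < tol


def qubit_von_neumann_entropy(bloch_length):
    """Entropy in bits of a qubit state with Bloch vector length r in [0, 1].

    Assumption: the state's eigenvalues are (1 + r)/2 and (1 - r)/2.
    """
    eigenvalues = [(1 + bloch_length) / 2, (1 - bloch_length) / 2]
    return sum(-p * math.log2(p) for p in eigenvalues if p > 0)


zero_state = [1, 0]  # eigenstate of Z with eigenvalue +1

xz_commutator = commutator(X, Z)
z_is_definite = is_eigenvector(Z, zero_state)
x_is_definite = is_eigenvector(X, zero_state)
pure_entropy = qubit_von_neumann_entropy(1.0)
mixed_entropy = qubit_von_neumann_entropy(0.0)
```

Here `xz_commutator` is non-zero, and the state that makes Z definite is not an eigenstate of X, which is the situation the eigenstate–eigenvalue link describes. A pure state (r = 1) has zero von Neumann entropy, while the maximally mixed state (r = 0) carries one bit.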

Information entropy is a concept from information theory. It tells how much information there is in an event. In general, the more certain or deterministic an event is, the less information it contains. Put differently, information is tied to an increase in uncertainty, or entropy. The concept of information entropy was created by the mathematician Claude Shannon. Information and its relationship to entropy can be modeled by $R = H(x) - H_y(x)$, where, in Shannon's words, "The conditional entropy $H_y(x)$ will, for convenience, be called the equivocation. It measures the average ambiguity of the received signal." The "average ambiguity" $H_y(x)$ here means uncertainty, or entropy. Information entropy has applications in many areas, including lossless data compression, statistical inference and cryptography, and sometimes in other disciplines such as biology, physics or machine learning.

The information gain is a measure of the probability with which a certain result is expected to happen. For a fair coin flip, with a 50-50 probability, the entropy takes its highest value of 1 bit: there is no prior information gain, because neither result is favored over the other. If one result is certain to occur (a 100-0 probability), the entropy is 0.

If someone is told something they already know, the information they get is very small. It is pointless for them to be told it, and this information has very low entropy. If they are told about something they know little about, they get much new information. This information is very valuable to them, and it has high entropy.
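The coin-flip values and the relation $R = H(x) - H_y(x)$ can be reproduced with a few lines of Python. This is an illustrative sketch under stated assumptions: entropy is measured in bits (base-2 logarithm), the channel is described by a hypothetical joint probability table `joint[x][y]`, and the equivocation is computed through the identity $H_y(x) = H(x, y) - H(y)$. The function names are not from the text.

```python
import math


def shannon_entropy(probabilities):
    """Entropy in bits of a discrete distribution; zero-probability terms add nothing."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


def transmission_rate(joint):
    """R = H(x) - H_y(x) for a joint table where joint[x][y] = P(x sent, y received).

    Assumption: the equivocation H_y(x) is obtained as H(x, y) - H(y).
    """
    p_x = [sum(row) for row in joint]
    p_y = [sum(column) for column in zip(*joint)]
    h_xy = shannon_entropy([p for row in joint for p in row])
    equivocation = h_xy - shannon_entropy(p_y)
    return shannon_entropy(p_x) - equivocation


fair_coin = shannon_entropy([0.5, 0.5])      # 50-50: maximum uncertainty
certain_result = shannon_entropy([1.0, 0.0])  # 100-0: no uncertainty

# Hypothetical channels with a fair binary source.
noiseless = transmission_rate([[0.5, 0.0], [0.0, 0.5]])
pure_noise = transmission_rate([[0.25, 0.25], [0.25, 0.25]])
```

With these inputs the fair coin has an entropy of 1 bit and the certain result has 0. The noiseless channel has zero equivocation and so transmits the full bit, while the channel whose output is independent of its input has an equivocation equal to $H(x)$ and transmits nothing.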
